After the debacle of the last report, the spotlight is back on climate change with the release of the new (fifth) assessment by the Intergovernmental Panel on Climate Change (IPCC). The financial crisis, combined with credibility issues over the last report as a result of errors found after publication, has meant that the issue has taken a back seat in recent years. This assessment is drawn from the work of 209 authors and 50 review editors from 39 countries, plus more than 600 other contributors from across the global scientific community. It will hopefully silence the nut job climate change deniers and allow for a renewed focus on concrete actions that can be taken to address climate change issues.

Yesterday’s publication from the IPCC is actually a set of headline statements and a “summary for policymakers” from IPCC Working Group I, which assesses the physical science aspects of climate change. A full draft report will be published in a few days and is expected to be finalised by late 2013 or early 2014. The two other working groups, creatively named IPCC Working Group II and III, are due to publish their reports in 2014 and are charged with assessing vulnerability to climate change and options for mitigating its effects respectively.

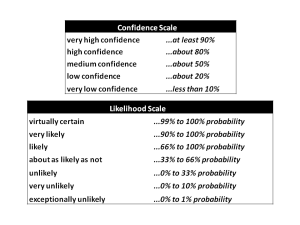

The language used by the IPCC is important. For some reason they use a number of terms for uncertainty, as follows:

The strongest (and most obvious) statement is:

“Warming of the climate system is unequivocal, and since the 1950s, many of the observed changes are unprecedented over decades to millennia. The atmosphere and ocean have warmed, the amounts of snow and ice have diminished, sea level has risen, and the concentrations of greenhouse gases have increased.”

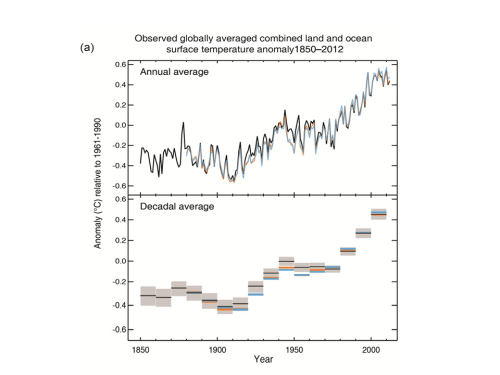

It does sound silly that it has taken us this many years for a statement like that to be made unequivocally, but that’s the world we live in. A graph from the WG I summary is below.

A number of the headline statements that are being reported in the press are included below.

Very high confidence is assigned to the ability of climate models “to reproduce observed continental-scale surface temperature patterns and trends over many decades, including the more rapid warming since the mid-20th century and the cooling immediately following volcanic eruptions”.

High confidence is assigned to each of the following:

- “Ocean warming dominates the increase in energy stored in the climate system, accounting for more than 90% of the energy accumulated between 1971 and 2010.”

- “Over the last two decades, the Greenland and Antarctic ice sheets have been losing mass, glaciers have continued to shrink almost worldwide, and Arctic sea ice and Northern Hemisphere spring snow cover have continued to decrease in extent.”

- “The rate of sea level rise since the mid-19th century has been larger than the mean rate during the previous two millennia.”

Medium confidence is assigned to the assertion that in “the Northern Hemisphere, 1983–2012 was likely the warmest 30-year period of the last 1400 years.”

Other strong statements include the following section (by the way, I think “extremely likely” is yet another new term, meaning over 95% probability!):

“It is extremely likely that human influence has been the dominant cause of the observed warming since the mid-20th century. Continued emissions of greenhouse gases will cause further warming and changes in all components of the climate system. Limiting climate change will require substantial and sustained reductions of greenhouse gas emissions.”
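For anyone who wants to keep the likelihood terms straight, here is a rough Python sketch of the scale as I understand it from the IPCC's guidance on uncertainty. The names `LIKELIHOOD_SCALE` and `probability_range` are my own and not anything the IPCC publishes, and the confidence terms (low, medium, high, very high) are a separate qualitative scale that is not covered here.

```python
from typing import Tuple

# Likelihood terms mapped to (lower, upper) probability bounds.
# Values are my reading of the IPCC uncertainty guidance; treat them
# as an illustration rather than an official data source.
LIKELIHOOD_SCALE = {
    "virtually certain": (0.99, 1.00),
    "extremely likely": (0.95, 1.00),
    "very likely": (0.90, 1.00),
    "likely": (0.66, 1.00),
    "about as likely as not": (0.33, 0.66),
    "unlikely": (0.00, 0.33),
    "very unlikely": (0.00, 0.10),
    "extremely unlikely": (0.00, 0.05),
    "exceptionally unlikely": (0.00, 0.01),
}


def probability_range(term: str) -> Tuple[float, float]:
    """Return the (lower, upper) probability bounds for a likelihood term."""
    # Normalise case and surrounding whitespace before the lookup.
    return LIKELIHOOD_SCALE[term.strip().lower()]


if __name__ == "__main__":
    low, high = probability_range("extremely likely")
    print(f"extremely likely: {low:.0%} to {high:.0%}")
```

So the statement on human influence above sits in the 95% to 100% band, one step below “virtually certain”.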

The summary documents also refer to the scenarios used, including four new scenarios, to assess impacts on items including CO2, temperature and sea levels. The full draft assessment and next year’s WG II and III reports will likely give more detail.

There are a few intriguing assertions in the report, although they are subject to final copyedit.

Medium confidence is used to describe the assessment of whether human actions have resulted in an increase in the frequency, intensity, and/or amount of heavy precipitation. For the early and the late 21st century respectively, it is assessed that increased precipitation is likely over many land areas, and very likely over most of the mid-latitude land masses and over wet tropical regions.

Low confidence is used to describe the assessment of whether human actions have resulted in an increase in intense tropical cyclone activity and is also used to describe the likelihood of a change in intense tropical cyclone activity in the early 21st century.

The more detailed draft report from WG I will be interesting reading.