It has been a while since I posted on dear old Solvency II (here). As highlighted in the previous post on potential losses, the insurance sector is perceived as having robust capital levels that mitigate against the current pricing and investment return headwinds. It is therefore interesting to look at some of the detail emerging from the new Solvency II framework in Europe, including the mandatory disclosures in the new Solvency and Financial Condition Report (SFCR).

The June 2017 Financial Stability report from EIOPA, the European insurance regulator, contains some interesting aggregate data from across the European insurance sector. The graph below shows solvency capital requirement (SCR) ratios, primarily driven by the standard formula, averaging consistently around 200% for non-life, life and composite insurers. The ratio is assets in excess of liabilities (as per Solvency II valuation rules) divided by the regulatory capital requirement, as calculated by a mandated standard formula or a firm’s own internal model. As the risk profile of each business model would suggest, the variability around the average SCR ratio is largest for the non-life insurers, followed by life insurers, with the least volatile being the composite insurers.
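To make the arithmetic behind a 200% ratio concrete, here is a minimal sketch that reads the ratio as capital held over capital required. The function name `scr_ratio` and the balance sheet figures are purely illustrative assumptions, and treating own funds as simply assets minus liabilities is a simplification (the actual rules on eligible own funds are more involved).

```python
def scr_ratio(assets: float, liabilities: float, scr: float) -> float:
    """Return the solvency ratio: (assets - liabilities) / SCR.

    Simplifying assumption: own funds are taken as assets in excess of
    liabilities, ignoring any eligibility or tiering adjustments.
    """
    if scr <= 0:
        raise ValueError("SCR must be positive")
    own_funds = assets - liabilities
    return own_funds / scr


# Hypothetical insurer: 1,000 of assets, 900 of liabilities, SCR of 50.
ratio = scr_ratio(assets=1_000.0, liabilities=900.0, scr=50.0)
print(f"SCR ratio: {ratio:.0%}")  # SCR ratio: 200%
```

In this made-up case the firm holds 100 of capital against a requirement of 50, which is what a ratio of 200% means.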

For some reason, which I can’t completely comprehend, the EIOPA Financial Stability report highlights differences in the SCR breakdown (as per the standard formula, expressed as a % of net basic SCR) across countries, as per the graph below, presumably due to the different profiles of each country’s insurance sector.

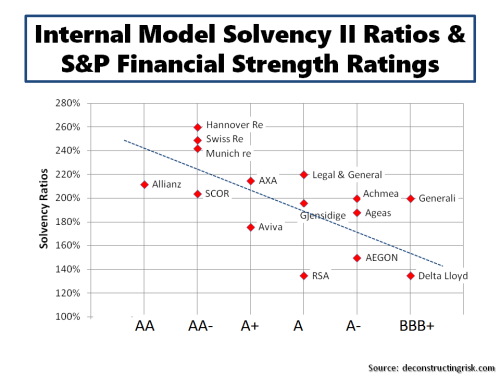

A review across several SFCRs from the larger European insurers and reinsurers who use internal models to calculate their SCRs highlights the differences in their risk profiles. A health warning on any such comparison should be stressed given the different risk categories and modelling methodologies used by each firm (the varying treatments of asset credit risk and business/operational risk are good examples of the differing approaches). The graph below shows each main risk category as a percentage of the undiversified total SCR.

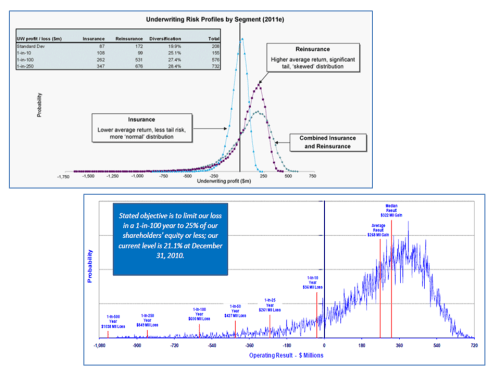
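For anyone wanting to reproduce this type of breakdown from an SFCR, the calculation is simply each risk category's capital charge divided by the sum of all charges before any diversification benefit. The sketch below assumes a hypothetical firm; the category names and amounts are invented for illustration and are not taken from any actual SFCR.

```python
def undiversified_shares(components: dict[str, float]) -> dict[str, float]:
    """Express each risk charge as a fraction of the undiversified total.

    The undiversified total is the plain sum of the component charges,
    i.e. before any diversification benefit is deducted.
    """
    total = sum(components.values())
    if total <= 0:
        raise ValueError("undiversified total must be positive")
    return {name: charge / total for name, charge in components.items()}


# Hypothetical charges by risk category (illustrative figures only).
example_charges = {
    "market": 40.0,
    "underwriting": 35.0,
    "credit": 15.0,
    "operational": 10.0,
}

shares = undiversified_shares(example_charges)
for name, share in shares.items():
    print(f"{name}: {share:.0%}")
```

Because firms define their risk categories differently, the same health warning applies to any numbers produced this way.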

By way of putting the internal model components in context, the graph below shows the SCR breakdown as a percentage of total assets (which obviously reflect insurance liabilities and the associated capital held against same). This comparison is also fraught with difficulty as a (re)insurer’s total assets are not necessarily a reliable measure of extreme insurance exposure in the same way as risk-weighted assets are for banks (used as the denominator in bank capital ratios). For example, some life insurers can have low insurance related liabilities and associated assets (e.g. for mortality related business) compared to other insurance products (e.g. most non-life exposures).

Notwithstanding that caveat, the graph below shows a marked difference between firms depending upon whether they are a reinsurer or insurer, or whether they are a life, non-life or composite insurer (other items such as retail versus commercial business, local or cross-border, specialty versus homogeneous are also factors).

Initial reactions by commentators on the insurance sector to the disclosures by European insurers through SFCRs have been mixed. Some have expressed disappointment at the level and consistency of detail being disclosed. Regulators will have their hands full in ensuring that sufficiently robust standards relating to such disclosures are met.

Regulators will also have to ensure that a fair and consistent approach is adopted across all European jurisdictions in calculating SCRs, particularly for those calculated using internal models, whilst avoiding the pitfall of forcing everybody to use the same assumptions and methodology. Recent reports suggest that EIOPA is looking for a greater role in approving all internal models across Europe. Systemic model risk under the proposed Basel II banking regulatory rules published in 2004 was arguably one of the contributors to the financial crisis.

Only time will tell if Solvency II has avoided the mistakes of Basel II in the handling of such beautiful models.